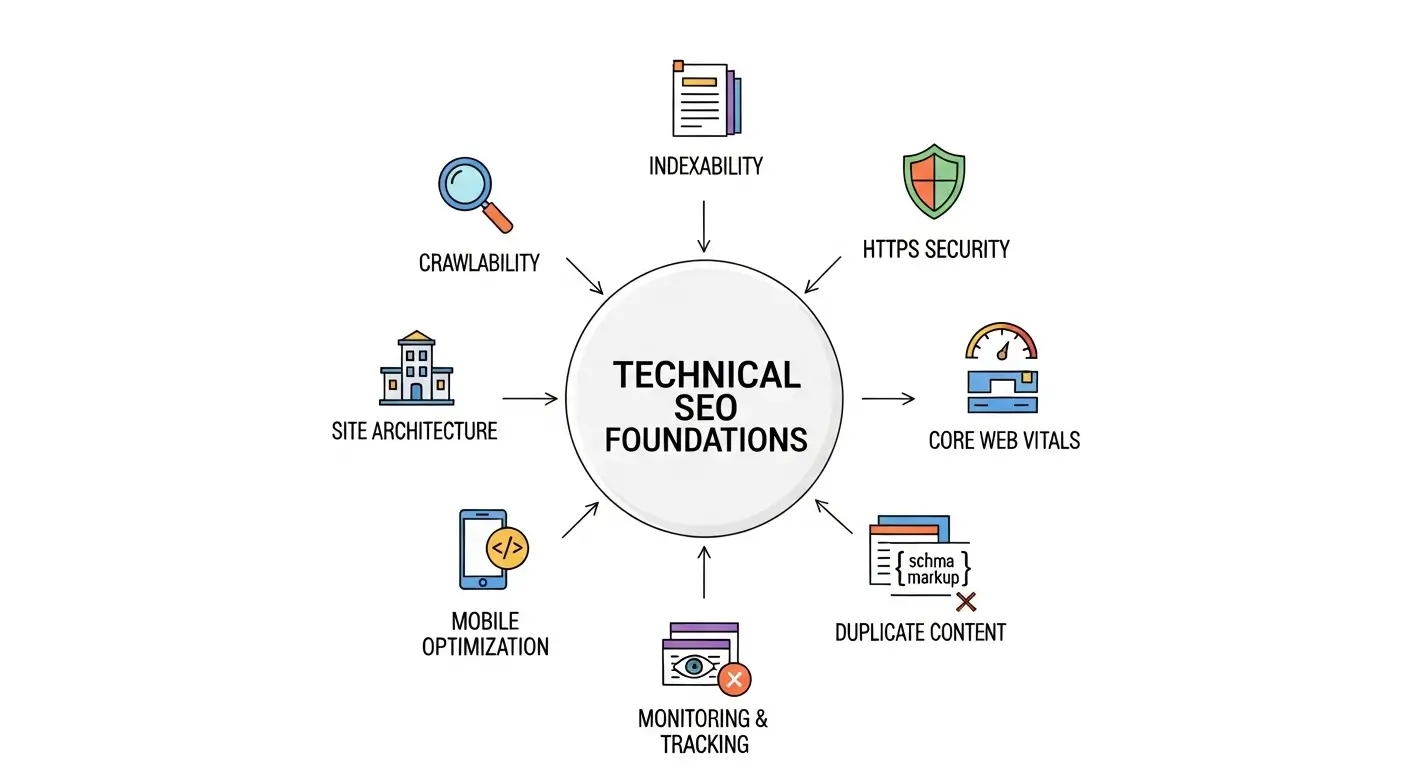

Technical SEO determines how easily search engines can access, understand, and rank a website. A site can have strong design and content, but without a solid technical foundation it cannot perform well in search. Before launch, confirm that crawlers can reach every important page, that pages load fast, and that the site structure communicates clearly with search engines.

Technical SEO covers areas such as HTTPS security, site architecture, Core Web Vitals, mobile optimization, and structured data. Each of these elements builds the framework that supports visibility and user experience.

This foundation reduces crawl errors, improves index coverage, and increases ranking potential. Launching without it often leads to wasted content, weak performance, and missed growth opportunities.

Ensure Full Crawlability and Indexability

Search engines must be able to find, read, and index every important page. Crawlability defines how bots access your website. Indexability determines whether those pages appear in search results. Together they form the base of technical SEO performance.

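The robots.txt file controls which paths crawlers may request. Keep it open for key directories and restrict only private or duplicate paths. Note that robots.txt governs crawling, not indexing: a blocked URL can still appear in results if other pages link to it, so use a noindex directive on a crawlable page when the goal is to keep it out of search. An XML sitemap lists the indexable URLs and helps search engines discover new pages quickly. Canonical tags signal the preferred version of similar content, which prevents duplication. Redirect loops and broken links interrupt crawling and waste crawl budget.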
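A pre-launch check for accidental robots.txt blocks could look like the sketch below. It uses Python's standard `urllib.robotparser`. The robots.txt rules, the example.com URLs, and the `blocked_urls` helper are all illustrative assumptions, not part of any real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are assumptions for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/
Sitemap: https://www.example.com/sitemap.xml
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules block for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical list of pages to verify before launch.
important_pages = [
    "https://www.example.com/",
    "https://www.example.com/services/seo-audit",
    "https://www.example.com/admin/login",
]

print(blocked_urls(ROBOTS_TXT, important_pages))
```

Only the `/admin/login` URL is reported here, which is the intended outcome. If a service or content page showed up in the list, that would point to an accidental block.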

When these systems align, search engines move through your site efficiently, index your content correctly, and deliver it to users searching for your services.
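As a rough illustration of the sitemap piece, the sketch below builds a minimal XML sitemap with Python's standard library. The `build_sitemap` helper, the URLs, and the dates are hypothetical. Most CMS platforms and SEO plugins generate this file automatically, so treat it as a picture of the format rather than a required step.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries: list[tuple[str, str]]) -> str:
    """Build sitemap XML from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date format, e.g. YYYY-MM-DD
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages and modification dates.
pages = [
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/services/seo-audit", "2024-01-10"),
]
print(build_sitemap(pages))
```

List only canonical, indexable URLs in the file. Redirected or noindexed pages send mixed signals.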

Use a Secure HTTPS Framework

HTTPS is a confirmed but lightweight Google ranking signal and a key trust signal for users. A secure HTTPS setup ensures data integrity and authenticated connections between browser and server. It also protects user interactions such as form submissions and payments.

Install a Valid SSL Certificate

HTTPS requires a TLS certificate (still commonly called an SSL certificate) issued by a trusted certificate authority. Once it is installed, every page should load over HTTPS. Expired or misconfigured certificates trigger browser warnings and reduce trust.

Redirect HTTP to HTTPS

Set 301 redirects from all HTTP URLs to their HTTPS counterparts. This prevents duplicate versions and consolidates link equity into a single secure URL structure.
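In practice the redirect is usually configured in the web server or CDN, but the logic is simple enough to sketch in Python. The helper names `https_counterpart` and `redirect_response` are hypothetical. The sketch assumes URLs without explicit port numbers.

```python
from urllib.parse import urlsplit, urlunsplit


def https_counterpart(url: str) -> str:
    """Return the HTTPS version of an HTTP URL, keeping host, path, and query unchanged."""
    parts = urlsplit(url)
    if parts.scheme != "http":
        return url  # already HTTPS (or another scheme): nothing to change
    return urlunsplit(("https", parts.netloc, parts.path, parts.query, parts.fragment))


def redirect_response(url: str):
    """Return (status, headers) for a permanent redirect, or None if no redirect is needed."""
    target = https_counterpart(url)
    if target == url:
        return None
    return "301 Moved Permanently", [("Location", target)]


print(redirect_response("http://www.example.com/services?ref=footer"))
print(redirect_response("https://www.example.com/services"))
```

Each HTTP URL maps to exactly one HTTPS URL in a single hop. That keeps the redirect from turning into a chain.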

Fix Mixed Content Issues

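Ensure that all scripts, images, and stylesheets load over HTTPS. Mixed content, meaning an HTTPS page that pulls in resources over plain HTTP, creates security risks. Browsers may block those resources, which can break the page for users and for search engines that render it.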
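One way to spot mixed content before launch is to scan page templates for resource URLs that still start with `http://`. The sketch below uses Python's built-in `html.parser`. The `MixedContentFinder` class and the sample markup are illustrative assumptions. It covers only a few common tags, so a full crawler or the browser console will catch more.

```python
from html.parser import HTMLParser


class MixedContentFinder(HTMLParser):
    """Collect resource URLs (scripts, images, stylesheets, iframes) served over http://."""

    RESOURCE_ATTRS = {"script": "src", "img": "src", "iframe": "src", "link": "href"}

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        # Only stylesheet <link> tags load a subresource; skip canonical, alternate, etc.
        if tag == "link" and "stylesheet" not in (attr_map.get("rel") or "").lower():
            return
        url = attr_map.get(self.RESOURCE_ATTRS.get(tag, ""))
        if url and url.lower().startswith("http://"):
            self.insecure.append((tag, url))


def find_mixed_content(html: str) -> list[tuple[str, str]]:
    """Return (tag, url) pairs for insecure resources found in the markup."""
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


# Hypothetical page markup.
page = """<html><head>
<link rel="stylesheet" href="https://www.example.com/site.css">
<script src="http://cdn.example.com/app.js"></script>
</head><body>
<img src="http://www.example.com/logo.png">
<a href="http://www.example.com/">Home</a>
</body></html>"""

for tag, url in find_mixed_content(page):
    print(tag, url)
```

The plain `<a>` link is ignored on purpose. Linking to an HTTP page is not mixed content, although such links should still be updated to avoid an extra redirect.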

Build a Clean and Logical Site Structure

Site structure defines how pages connect and how easily users and search engines move through them. A well-organized structure improves crawl efficiency, user navigation, and link equity distribution.

Keep important pages within two or three clicks from the homepage. Use clear categories that reflect the website’s core services or topics. Every URL should stay short, lowercase, and descriptive, using hyphens to separate words.
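The URL rules above (short, lowercase, hyphen-separated) can be enforced with a small helper. The sketch below is one possible `slugify` function, not a standard. Stripping accents is an assumption, and some sites deliberately keep non-ASCII characters in URLs.

```python
import re
import unicodedata


def slugify(title: str) -> str:
    """Turn a page title into a lowercase, hyphen-separated URL slug."""
    # Decompose accented characters and drop the accents (assumption: ASCII-only slugs).
    ascii_text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    # Replace every run of characters other than a-z and 0-9 with a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower())
    return slug.strip("-")


print(slugify("Technical SEO Audit & Site Migration"))  # technical-seo-audit-site-migration
print(slugify("  Café Menu!  "))                        # cafe-menu
```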

Internal links guide both users and bots toward relevant content. Use descriptive anchor text that matches the topic of the destination page. Ensure that no important page remains isolated or hidden from the main navigation.
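Click depth and isolated pages can both be checked from a crawl export of internal links. The sketch below runs a breadth-first search from the homepage. The link graph is invented for illustration. In a real audit it would come from a crawler such as Screaming Frog.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage: clicks needed to reach each linked page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site_links = {
    "/": ["/services/", "/blog/"],
    "/services/": ["/services/seo-audit/"],
    "/blog/": ["/blog/launch-checklist/"],
    "/blog/launch-checklist/": ["/services/seo-audit/"],
    "/old-landing-page/": ["/"],  # links out, but no page links to it
}

depths = click_depths(site_links)
too_deep = [page for page, depth in depths.items() if depth > 3]
orphans = sorted(set(site_links) - set(depths))

print(depths)
print("Deeper than three clicks:", too_deep)
print("Not reachable from the homepage:", orphans)
```

Pages in `orphans` exist on the site but cannot be reached by following links from the homepage. This is the "isolated page" case described above.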

Strong architecture supports smooth crawling, faster indexing, and higher visibility across every level of the website.

Optimize for Speed and Core Web Vitals

Website speed directly influences user satisfaction and engagement, and it can affect rankings. Core Web Vitals measure how quickly the main content loads, how visually stable the page is, and how quickly it responds to user input. These metrics are based on real user experience and serve as a practical benchmark for technical performance.

Measure Core Web Vitals

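Track Largest Contentful Paint (LCP), Cumulative Layout Shift (CLS), and Interaction to Next Paint (INP) with Google PageSpeed Insights, which reports field data from real users when enough is available. Lighthouse lab runs cover LCP and CLS but cannot measure INP, because INP depends on real interactions; Total Blocking Time serves as the lab proxy. Keep LCP at or under 2.5 seconds, CLS at or under 0.1, and INP at or under 200 milliseconds, assessed at the 75th percentile of page visits.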
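The thresholds above are easy to turn into an automated check, for example in a deployment pipeline. The sketch below is a minimal illustration. The metric key names and the sample measurements are assumptions, and real values would come from field data or a monitoring tool.

```python
# "Good" thresholds as stated in the text above.
GOOD_THRESHOLDS = {"lcp_seconds": 2.5, "cls": 0.1, "inp_ms": 200}


def failing_metrics(measured: dict[str, float]) -> list[str]:
    """Return the names of metrics that exceed their 'good' threshold.

    A metric missing from `measured` counts as failing, so gaps in the data are not ignored.
    """
    return [
        name
        for name, limit in GOOD_THRESHOLDS.items()
        if measured.get(name, float("inf")) > limit
    ]


# Hypothetical 75th-percentile measurements for one page.
sample = {"lcp_seconds": 3.1, "cls": 0.05, "inp_ms": 180}
print(failing_metrics(sample))  # ['lcp_seconds']
```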

Reduce Page Weight

Compress and resize images without losing quality. Minify CSS, JavaScript, and HTML files. Remove unused scripts and plugins that slow rendering.

Improve Delivery

Implement browser caching and serve assets through a Content Delivery Network. Reduce server response time with optimized hosting and database queries.

Fast, stable, and responsive pages create a smooth experience that keeps users engaged and signals strong technical health to search engines.

Implement Mobile-First and Responsive Design

Mobile usability defines how visitors interact with your website on different devices. Google uses mobile-first indexing, which means it primarily crawls and indexes the mobile version of each page, so every page must deliver a seamless experience on small screens.

Design layouts that adapt to all screen sizes using flexible grids and scalable images. Keep navigation simple and easy to tap with adequate spacing between buttons. Text should remain readable without zooming or horizontal scrolling.

Test your site on mobile with Lighthouse or Chrome DevTools device emulation, since Google retired its standalone Mobile-Friendly Test tool in December 2023. Identify issues such as overlapping elements, slow mobile load times, or blocked scripts. Fix them before launch to ensure full functionality across devices.

Consistent mobile performance improves accessibility, user retention, and search visibility for every visitor, regardless of device type or connection speed.

Add Structured Data and Schema Markup

Structured data helps search engines understand the meaning and relationships within your content. Schema markup translates on-page information into a format that crawlers can interpret accurately. This improves indexing clarity and can enhance search appearance with rich results.

Apply Organization Schema

Include structured data that defines your business name, logo, address, and contact information. This supports brand recognition and builds authority across search results.
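Organization markup is typically embedded as a JSON-LD script tag in the page head. The sketch below generates one with Python's `json` module. The property names come from schema.org. The `organization_jsonld` helper and all business details are placeholders.

```python
import json


def organization_jsonld(name, url, logo, telephone, street, city, country):
    """Return a JSON-LD <script> tag describing an Organization (schema.org vocabulary)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressCountry": country,
        },
        "contactPoint": {
            "@type": "ContactPoint",
            "telephone": telephone,
            "contactType": "customer service",
        },
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


# Placeholder business details for illustration only.
print(organization_jsonld(
    name="Example Agency",
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    telephone="+1-555-0100",
    street="1 Example Street",
    city="Springfield",
    country="US",
))
```

The output should still be validated, for instance with Google's Rich Results Test or the Schema Markup Validator, before it ships.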

Use Breadcrumb Schema

Show the hierarchy of your website’s pages through breadcrumb markup. It helps users navigate efficiently and allows crawlers to understand content depth.
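Breadcrumb markup follows the schema.org `BreadcrumbList` type, where each step is a `ListItem` with a 1-based `position`. The sketch below builds it from an ordered trail. The `breadcrumb_jsonld` helper and the example trail are hypothetical.

```python
import json


def breadcrumb_jsonld(trail: list[tuple[str, str]]) -> str:
    """Build BreadcrumbList JSON-LD from an ordered list of (name, url) pairs."""
    items = [
        {"@type": "ListItem", "position": position, "name": name, "item": url}
        for position, (name, url) in enumerate(trail, start=1)
    ]
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }
    return json.dumps(data, indent=2)


# Hypothetical path from the homepage to a service page.
trail = [
    ("Home", "https://www.example.com/"),
    ("Services", "https://www.example.com/services/"),
    ("SEO Audit", "https://www.example.com/services/seo-audit/"),
]
print(breadcrumb_jsonld(trail))
```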

Add Service and Review Schema

For agencies or service-based businesses, service and review schemas communicate expertise, offerings, and credibility. They can make pages eligible for rating snippets or feature highlights in search results, although search engines decide whether to show them and apply their own eligibility rules.

Structured data strengthens semantic relevance, improves visibility, and reinforces how search engines connect your website’s entities.

Eliminate Duplicate and Thin Content

Duplicate and thin content weaken crawl efficiency and dilute ranking signals. Search engines prefer unique, information-rich pages that serve a clear purpose. Cleaning weak or repeated material ensures that every indexed page contributes distinct value.

Identify duplicate URLs or pages through audit tools such as Screaming Frog or Google Search Console. Consolidate overlapping content with 301 redirects or canonical tags that signal the main version to index.
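Many duplicates are the same page reachable under slightly different URLs. The sketch below groups URL variants by a normalized form so they can be consolidated. The normalization rules and the list of tracking parameters are assumptions. Confirm that the variants really serve identical content before you redirect or canonicalize them.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of tracking parameters that do not change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "gclid", "fbclid"}


def normalize(url: str) -> str:
    """Reduce a URL to a comparison key: https, lowercase host, no trailing slash, no tracking."""
    parts = urlsplit(url)
    query = sorted((k, v) for k, v in parse_qsl(parts.query) if k not in TRACKING_PARAMS)
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(("https", parts.netloc.lower(), path, urlencode(query), ""))


def duplicate_groups(urls: list[str]) -> dict[str, list[str]]:
    """Map each normalized URL to its variants, keeping only groups with more than one URL."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: variants for key, variants in groups.items() if len(variants) > 1}


# Hypothetical URLs from a crawl export.
crawled = [
    "http://Example.com/services/",
    "https://example.com/services?utm_source=newsletter",
    "https://example.com/about",
]
print(duplicate_groups(crawled))
```

Each group is a candidate for a single 301 target or canonical URL.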

Remove or noindex low-value pages such as archives, test URLs, or empty categories. Strengthen thin pages with unique copy, data, or visuals that expand topic coverage.

Set Up Monitoring and Tracking Tools

Tracking tools provide data that reveal how search engines and users interact with your website. Without them, technical issues remain hidden, and performance cannot be measured accurately.

Configure Google Search Console

Connect the website to Google Search Console to monitor index coverage, crawl status, and structured data validation. Review reports regularly to detect errors, warnings, or blocked pages.

Install Google Analytics 4 and Tag Manager

Implement GA4 to track user behavior, traffic sources, and conversion paths. Use Tag Manager for flexible event tracking without manual code edits.

Schedule Regular Technical Audits

Run monthly or quarterly audits with tools like Semrush or Screaming Frog to check for broken links, redirect chains, and speed regressions.

Maintain Ongoing Technical Health

Technical SEO does not end after launch. Websites evolve, and each change can create new performance or indexing issues. Regular maintenance keeps the technical foundation stable and prevents ranking loss over time.

Audit the site monthly to detect crawl errors, duplicate content, and speed drops. Review log files to understand how often search engines visit and where they encounter obstacles. Track changes in Core Web Vitals and fix performance regressions immediately.
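A basic log file review can be scripted. The sketch below counts Googlebot requests per path and collects 4xx and 5xx responses. It assumes the common "combined" access log format and matches the user-agent string only. Since user agents can be spoofed, a thorough audit would also verify the requesting IP addresses. The sample log lines are invented.

```python
import re
from collections import Counter

# Matches the request, status code, and final quoted field (user agent) of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_summary(lines):
    """Return (hits per path, error counts per (path, status)) for Googlebot requests."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if not match or "Googlebot" not in match["agent"]:
            continue
        hits[match["path"]] += 1
        if match["status"][0] in "45":  # 4xx and 5xx responses
            errors[(match["path"], match["status"])] += 1
    return hits, errors


# Invented sample lines in combined log format.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [12/Mar/2024:06:25:11 +0000] "GET /services/ HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [12/Mar/2024:06:25:14 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [12/Mar/2024:06:26:02 +0000] "GET / HTTP/1.1" 200 8410 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

hits, errors = googlebot_summary(sample_log)
print(hits)
print(errors)
```

Paths that return errors to Googlebot are the obstacles worth fixing first. Important pages with few or no hits may need stronger internal linking.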

Update structured data whenever services, products, or business details change. Keep the XML sitemap current and resubmit it after significant changes so search engines can pick up new and updated URLs sooner.

Launch Checklist Summary

Every website should go live only after its technical foundation is verified and stable. This checklist confirms that core systems function correctly and search engines can process the site without friction.

Essential items before launch:

- HTTPS fully active and tested on all pages

- robots.txt verified and free from accidental blocks

- XML sitemap created and submitted to Google Search Console

- Canonical tags implemented for duplicate prevention

- Core Web Vitals within target thresholds

- Mobile layout fully responsive and validated

- Structured data correctly added and tested

- Google Analytics 4 and Search Console configured

- All internal links functional and free of redirects

This checklist confirms that your website launches with a verified technical base that supports fast indexing, stable ranking, and lasting growth.

Conclusion

Technical SEO defines how well a website communicates with search engines. A strong foundation ensures that pages load fast, remain secure, and stay easy to crawl. Each element, from HTTPS to structured data, is part of a connected system that supports visibility and user trust.

Websites that launch with clean code, optimized performance, and clear structure gain faster indexing and stronger ranking potential. Continuous monitoring maintains that advantage as the site grows.

Every decision during setup affects how search engines perceive quality and relevance. Building technical accuracy from the start saves time, prevents future errors, and creates a foundation that supports both users and long-term search success.

FAQ

What is the main goal of technical SEO?

Technical SEO ensures that search engines can crawl, index, and understand a website efficiently. It focuses on improving speed, structure, security, and accessibility so that content performs at its full potential in search results.

Why is crawlability important for a new website?

Crawlability defines how search engine bots access and move through a site. If crawlers face blocked URLs, broken links, or redirect chains, they cannot index pages properly. Ensuring full crawl access allows search engines to find every important page fast.

How do HTTPS and SSL certificates affect SEO?

HTTPS encrypts data between the user and the server, protecting sensitive information and building trust. Google treats HTTPS as a lightweight ranking signal, and browsers label HTTP pages as not secure, which can lower engagement and conversions.

What are Core Web Vitals and why do they matter?

Core Web Vitals measure how users experience loading speed, responsiveness, and visual stability on your website. They include Largest Contentful Paint (LCP), Cumulative Layout Shift (CLS), and Interaction to Next Paint (INP). Strong metrics support better user satisfaction and can help rankings.

How does site architecture improve SEO performance?

A clear site structure helps search engines understand the hierarchy and relevance of pages. Logical internal linking distributes authority, reduces crawl depth, and increases visibility for key service or content pages.

What type of structured data should a business website use?

A business website should implement Organization, Breadcrumb, Service, and Review schema. These structured data types help search engines identify your brand, understand page relationships, and display enhanced results such as ratings or service highlights.

How often should technical SEO audits be performed?

Audit your site at least once every quarter or after any major update. Regular audits catch crawl errors, speed drops, and indexing issues early, keeping the website stable and high-performing.

What tools help monitor technical SEO performance?

Essential tools include Google Search Console, Google Analytics 4, Lighthouse, Screaming Frog, and Semrush. These reveal crawl issues, performance metrics, and structured data validation errors.

How does mobile optimization influence search rankings?

Google uses mobile-first indexing, so it primarily evaluates the mobile version of each page. A mobile-optimized site offers faster load times, better usability, and stronger engagement on phones, which together support search performance.

What happens if a website ignores technical SEO?

Ignoring technical SEO can lead to slow loading times, blocked pages, crawl errors, duplicate content, and poor index coverage. Even the best content cannot rank if search engines fail to access or understand it.